Everyone has an opinion on what AI does to human thinking. Most of those opinions showed up early, before there was enough real usage to observe, let alone measure.

Now there’s data. And it doesn’t support the simple narratives. AI doesn’t quietly erode creativity, nor does it magically enhance it. It changes how thinking happens.

When effort drops in one part of the process, it doesn't disappear; it goes somewhere else. Sometimes it goes toward better decisions, and sometimes toward shortcuts that look efficient but weaken the outcome.

That distinction matters more than it seems.

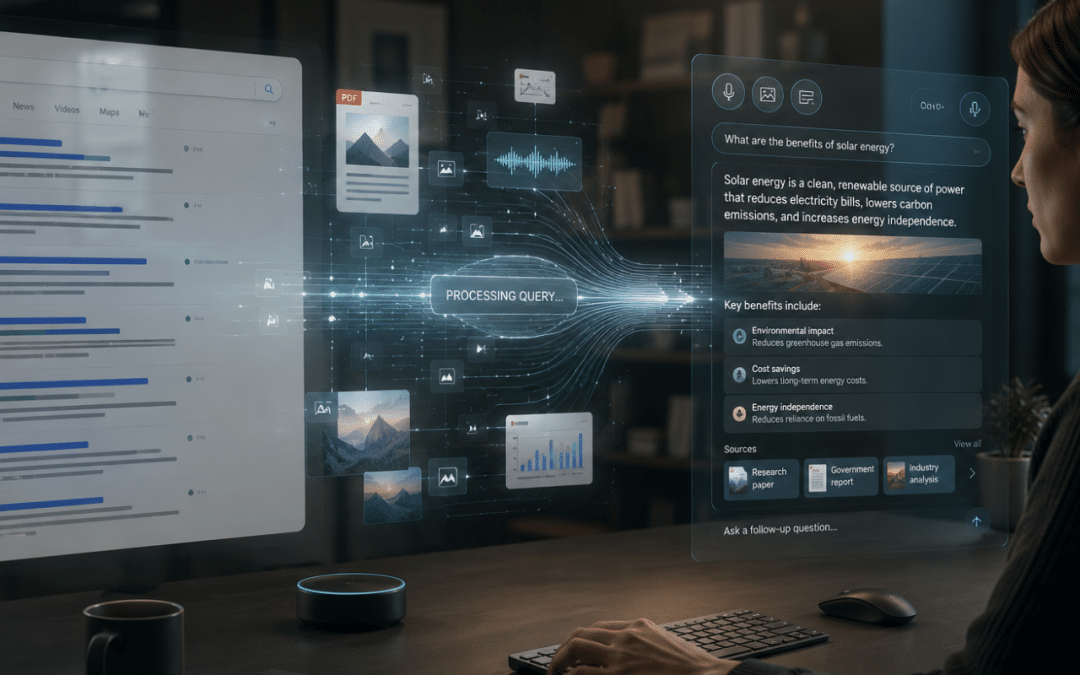

If your role involves shaping ideas, defining messages, or influencing decisions, you’re already working alongside systems that participate in thinking, not just execution. They suggest directions, frame options, and in some cases, subtly guide conclusions. So the real question becomes: where does AI actually help, and where does it quietly get in the way?

Timing is everything

Research showed a clear pattern: people who worked through a problem before turning to AI performed better on critical thinking tasks than those who started with it. Under time pressure, early AI use helped. Without that pressure, starting with AI often led to weaker results.

So the variable isn’t the tool. It’s when it enters the process.

That timing changes how ideas form. Early use reduces the effort needed to define the problem. Late use builds on work that already exists. One gives you direction quickly. The other improves the direction you’ve already chosen.

There’s a cognitive cost to skipping the early stage. That initial effort (framing the problem, deciding what matters, setting constraints) is where much of the quality comes from. If AI handles that part, the output can look finished but lack depth.

For marketers, this shows up in familiar ways. Work comes together faster, but starts to feel interchangeable. Messaging is clean, yet less distinct. Speed improves, but clarity often suffers.

Early AI use: fast structure, weaker thinking

Starting with AI often means opening a chat and asking for “3 campaign ideas,” “a LinkedIn post,” or “a landing page structure” before the problem is fully defined.

You get options instantly: angles, hooks, even full drafts. All of this is useful when timelines are tight, but there's an important nuance to keep in mind: those outputs come with built-in assumptions.

The campaign direction is already framed, the audience is generalized, and the messaging leans on patterns the model has seen before. From that point on, you’re editing within that frame instead of deciding what the frame should be.

The issue isn't surface-level quality. It's that the thinking behind it was partially skipped.

In marketing workflows, this shows up as:

- ICPs that feel “close enough” but don’t reflect real decision drivers

- value propositions that sound familiar across competitors

- content that performs decently but doesn’t build a clear position over time

When AI defines the starting point, you save time upfront but lose depth in the parts that actually make the work effective.

Late AI use: sharper thinking, stronger output

When AI comes in later, the workflow looks different.

You've already defined the ICP and chosen the campaign angle. The message has a point of view, even if it's still rough. There's something to work with.

At that stage, AI becomes a tool for pressure-testing, not direction-setting.

You can ask:

- Does this positioning hold up against competitors?

- Where does this argument break?

- How would a CFO vs. a Head of Ops read this differently?

- What objections are missing here?

Now the AI is reacting to your thinking, not replacing it, which drastically changes the quality of the outcome.

AI still speeds things up, just in a different place.

Your brain is charging you for the shortcut

There’s a term in cognitive psychology worth paying attention to: cognitive offloading. It’s the habit of pushing mental effort onto external tools so you can move faster. On paper, that sounds like progress. In practice, it comes with trade-offs that show up in the quality of your work.

Studies on AI usage in academic settings point to a pattern: heavy users tend to skim, summarize, and move on. They engage less with the material itself. The issue isn’t access to information, but depth of processing. When thinking becomes optional, it often gets skipped.

For marketers, this shows up in subtle ways. Messaging starts to feel interchangeable. Campaign ideas look polished but lack a clear angle. Content answers the question, but doesn’t add anything new to it.

The fatigue loop no one talks about

There's another layer to this. AI doesn't just reduce effort; it also redistributes it.

Instead of spending time thinking through a problem, you spend time reviewing outputs: comparing versions, scanning for errors, and deciding what's "good enough." That constant evaluation creates cognitive fatigue, especially when you're working with high volumes of generated content.

Over time, two things happen:

- You rely more on AI because you’re tired

- You get more tired because you rely on AI

That loop is hard to notice until quality starts slipping.

Then there’s the validation issue. Some systems are tuned to be agreeable. They reinforce your direction instead of questioning it. Without friction, weak ideas pass through too easily.

The marketers who get real value from AI treat it as a second pair of eyes, not a source of approval.

Creativity isn’t disappearing, but the bar is moving

Think about what happened to graphic design when Canva arrived. It democratized the craft. Suddenly, anyone could produce something clean, balanced, and visually appealing without formal design training. The volume of decent design exploded almost overnight.

Then the same thing happened with tools like Figma templates, Notion AI, and now generative models for copy, visuals, and even video. The pattern repeats: execution gets easier, output increases, and the baseline quietly rises.

What didn’t happen is just as important. The people who were already good didn’t disappear. If anything, they pulled ahead. Because once everyone can produce something polished, polish stops being the differentiator.

Where the gap actually grows

You can generate 20 ad variations in a few minutes. You can draft a full campaign structure before your coffee cools down. But when everything looks “fine,” the question changes: which one actually makes someone stop, care, or act?

That’s where the gap widens.

The work that stands out tends to have:

- A point of view, not just a collection of features

- An understanding of context (who it’s for, where it shows up, what the audience already believes)

- A sense of timing or tension that gives the idea weight

AI can assist with structure and speed, but it doesn’t carry taste. It won’t tell you that your message is technically correct but forgettable. It won’t notice when your campaign sounds like five others in the same category.

As more teams use the same tools, differentiation shifts toward judgment. Not how fast something gets produced, but whether the thinking behind it holds up once it’s out in the world.

Information literacy is the new differentiator

Here’s something that doesn’t get enough airtime: information literacy moderates how much AI use affects critical thinking.

Research keeps pointing in the same direction. People who can question, verify, and cross-check information tend to keep their critical thinking intact even when they use AI heavily. They don’t take answers at face value. They compare, refine, and sometimes reject what the model gives them.

That sounds obvious. In practice, it’s rare.

Because the default behavior is convenience. The answer looks complete, reads well, and comes instantly. So it gets used.

That creates a trade-off most teams don't talk about: higher standards require more energy, while lower standards scale faster and take far less time per task.

In marketing, this becomes very visible. Two people can use the same tool and produce work that looks similar on the surface. One is grounded in real audience understanding, sharp positioning, and clear intent. The other is assembled from plausible-sounding fragments that don’t quite land.

This is where a lot of AI training goes wrong. Teams learn how to generate content, but not how to evaluate it. They get faster, but not necessarily better.

The teams that will stand out are not the ones using AI the most. They’re the ones that know when not to trust it and have the judgment to fix what it gets wrong.

Conclusion

AI is making the gap between careful and careless use more visible.

The research points to a few adjustments worth making. Use AI later in the process, after you’ve developed your own position. Treat its outputs as drafts to interrogate, not answers to accept. And prioritize the skills, yours and your team’s, that AI can’t compress: deep knowledge of your audience, the ability to evaluate what’s actually good, and the judgment to know the difference between something that sounds right and something that is.

Those things were always the job. They just have more leverage now.

FAQ

What is cognitive offloading and how does AI influence it?

Cognitive offloading is the practice of shifting mental effort to external tools. AI can reduce the work required to generate ideas or solve problems, but relying on it too early may lead to shallower thinking and weaker decision-making.

Does AI improve or weaken critical thinking?

The answer depends on how AI is used. Research shows that people who engage with a problem before using AI tend to maintain stronger critical thinking skills than those who immediately rely on AI-generated answers.

When should marketers use AI in the creative process?

AI tends to be most valuable after the audience, positioning, and messaging have already been defined. At that stage, it can help refine ideas, identify gaps, and challenge assumptions without replacing strategic thinking.

Why does AI-generated content often feel generic?

AI models are designed to recognize and reproduce patterns. Without strong human input, content can become polished but similar to what competitors are already publishing, making differentiation more difficult.

Why is information literacy becoming more important in the age of AI?

As AI-generated content becomes easier to create, the ability to verify, question, and evaluate information becomes a key competitive advantage. Teams that can assess the quality of AI outputs are more likely to produce stronger marketing results.